If you have been following my blog (who hasn’t?), you know that I am writing on the topic of evaluation quality, the theme of the 2010 annual conference of the American Evaluation Association taking place November 10-13. It is a serious subject. Really.

But here is a joke, though perhaps only the evaluarati (you know who you are) will find it amusing.

A quantitative evaluator, a qualitative evaluator, and a normal person are waiting for a bus. The normal person suddenly shouts, “Watch out, the bus is out of control and heading right for us! We will surely be killed!”

Without looking up from his newspaper, the quantitative evaluator calmly responds, “That is an awfully strong causal claim you are making. There is anecdotal evidence to suggest that buses can kill people, but the research does not bear it out. People ride buses all the time and they are rarely killed by them. The correlation between riding buses and being killed by them is very nearly zero. I defy you to produce any credible evidence that buses pose a significant danger. It would really be an extraordinary thing if we were killed by a bus. I wouldn’t worry.”

Dismayed, the normal person starts gesticulating and shouting, “But there is a bus! A particular bus! That bus! And it is heading directly toward some particular people! Us! And I am quite certain that it will hit us, and if it hits us it will undoubtedly kill us!”

At this point the qualitative evaluator, who was observing this exchange from a safe distance, interjects, “What exactly do you mean by bus? After all, we all construct our own understanding of that very fluid concept. For some, the bus is a mere machine, for others it is what connects them to their work, their school, the ones they love. I mean, have you ever sat down and really considered the bus-ness of it all? It is quite immense, I assure you. I hope I am not being too forward, but may I be a critical friend for just a moment? I don’t think you’ve really thought this whole bus thing out. It would be a pity to go about pushing the sort of simple linear logic that connects something as conceptually complex as a bus to an outcome as one-dimensional as death.”

Very dismayed, the normal person runs away screaming, the bus collides with the quantitative and qualitative evaluators, and it kills both instantly.

Very, very dismayed, the normal person begins pleading with a bystander, “I told them the bus would kill them. The bus did kill them. I feel awful.”

To which the bystander replies, “Tut tut, my good man. I am a statistician, and I can tell you for a fact that a sample size of 2 and no proper control group will not do. How could we possibly conclude that it was the bus that did them in?”

To the extent that this is funny (I find it hilarious, but I am afraid that I may share Sir Isaac Newton’s sense of humor) it is because it plays on our stereotypes about the field. Quantitative evaluators are branded as aloof, overly logical, obsessed with causality, and too concerned with general rather than local knowledge. Qualitative evaluators, on the other hand, are suspect because they are supposedly motivated by social interaction, overly intuitive, obsessed with description, and too concerned with local knowledge. And statisticians are often looked upon as the referees in this cat-and-dog world, charged with setting up and arbitrating the rules by which evaluators in both camps must (or must not) play.

The problem with these stereotypes, like all stereotypes, is that they are inaccurate. Yet we cling to them and make judgments about evaluation quality based upon them. But what if we shift our perspective to that of the (tongue in cheek) normal person? This is not an easy thing to do if, like me, you spend most of your time inside the details of the work and the debates of the profession. Normal people want to do the right thing, feel the need to act quickly to make things right, and hope to be informed by evaluators and others who support their efforts. Sometimes normal people are responsible for programs that operate in particular local contexts, and at other times they are responsible for policies that affect virtually everyone. How do we help normal people get what they want and need?

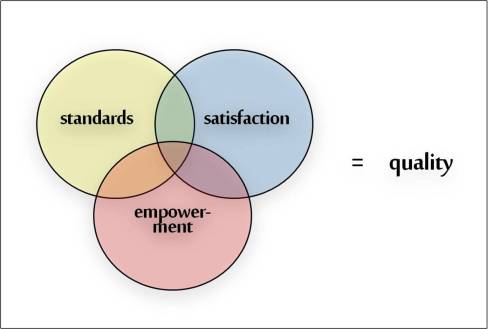

I have been arguing that we should help them, and that when we do, we have met one of my three criteria for quality: satisfaction. The key is first to acknowledge that we serve others, and then to do our best to understand their perspective. If we are weighed down by the baggage of professional stereotypes, that baggage can prevent us from choosing well from among all the ways we can meet the needs of others. I suppose that stereotypes can be useful when they help us laugh at ourselves, but if we come to believe them, our practice can become unaccommodatingly narrow, and the people we serve (normal people) will soon begin to run away, screaming, from us and the field. That is nothing to laugh at.