Did you miss the Catapult Labs conference on May 19? Then you missed something extraordinary.

But don’t worry, you can get the recap here.

The event was sponsored by Catapult Design, a nonprofit firm in San Francisco that uses the process and products of design to alleviate poverty in marginalized communities. Their work spans the worlds of development, mechanical engineering, ethnography, product design, and evaluation.

That is really, really cool.

I find them remarkable and their approach refreshing. Even more so because they are not alone. The conference was very well attended by diverse professionals—from government, the nonprofit sector, the for-profit sector, and design—all doing similar work.

The day was divided into three sets of three concurrent sessions, each presented as a hands-on lab. So, sadly, I could attend only one-third of what was on offer. My apologies to those who presented and are not included here.

I started the day by attending Democratizing Design: Co-creating With Your Users, presented by Catapult’s Heather Fleming. It provided an overview of techniques designers use to include stakeholders in the design process.

Evaluators go to great lengths to include stakeholders. We have broad, well-established approaches such as empowerment evaluation and participatory evaluation. But the techniques designers use are largely unknown to evaluators. I believe there is a great deal we can learn from designers in this area.

An example is games. Heather organized a game in which we used beans as money. Players chose which crops to plant, each with its own associated cost, risk profile, and potential return. The expected payoff varied by gender, which was arbitrarily assigned to players. After a few rounds the problem was clear—higher costs, lower returns, and greater risks for women increased their chances of financial ruin, and this had negative consequences for communities.

I believe that evaluators could put games to good use. Describing a social problem as a game requires stakeholders to express their cause-and-effect assumptions about the problem. Playing with a group allows others to understand those assumptions intimately, comment upon them, and offer suggestions about how to solve the problem within the rules of the game (or perhaps change the rules to make the problem solvable).
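To show what it means to write down cause-and-effect assumptions as rules, here is a minimal sketch of a bean game in Python. It is not the game Heather ran: every crop, cost, risk, and payoff below is a number I invented for illustration, and the names `CROPS`, `ADJUSTMENTS`, `play`, and `ruin_rate` are hypothetical.

```python
import random

# All numbers are invented for illustration; they are NOT the rules of the
# game played at the conference.
CROPS = {
    # name: (cost in beans, probability the harvest fails, payoff if it succeeds)
    "safe": (2, 0.1, 4),
    "risky": (4, 0.4, 9),
}

# Hypothetical assumption stated as a rule: women face higher costs,
# greater risk, and lower returns than men for the same crop.
ADJUSTMENTS = {
    "man": {"extra_cost": 0, "extra_risk": 0.0, "payoff_scale": 1.0},
    "woman": {"extra_cost": 1, "extra_risk": 0.1, "payoff_scale": 0.8},
}


def play(gender, crop, rounds=10, beans=10, rng=random):
    """Play one game and return the final bean count (0 means ruin)."""
    cost, risk, payoff = CROPS[crop]
    adj = ADJUSTMENTS[gender]
    total_cost = cost + adj["extra_cost"]
    for _ in range(rounds):
        if beans < total_cost:
            return 0  # cannot afford to plant: financial ruin
        beans -= total_cost
        # The harvest succeeds unless the (adjusted) risk strikes.
        if rng.random() >= risk + adj["extra_risk"]:
            beans += payoff * adj["payoff_scale"]
    return beans


def ruin_rate(gender, crop, games=10_000, seed=0):
    """Estimate the share of games that end in ruin for one gender and crop."""
    rng = random.Random(seed)
    ruined = sum(play(gender, crop, rng=rng) == 0 for _ in range(games))
    return ruined / games


if __name__ == "__main__":
    for gender in ADJUSTMENTS:
        for crop in CROPS:
            print(gender, crop, ruin_rate(gender, crop))
```

Because the assumptions live in two small tables, a stakeholder who disagrees with them can change a number, or a rule, and replay the game to see what follows.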

I have never met a group of people who were more sincere in their pursuit of positive change, or more honest in their struggle to evaluate their impact. I believe that impact evaluation is an area where evaluators have something valuable to share with designers.

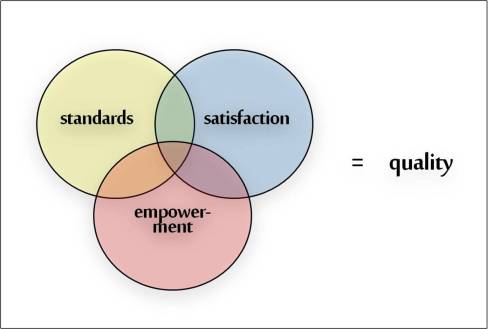

That was the purpose of my workshop, Measuring Social Impact: How to Integrate Evaluation & Design. I presented a number of techniques and tools we use at Gargani + Company to design and evaluate programs. They are part of a more comprehensive program design approach that Stewart Donaldson and I will be sharing this summer and fall in workshops and publications (details to follow).

The hands-on format of the lab made for a great experience. I was able to watch participants work through the real-world design problems that I posed. And I was encouraged by how quickly they were able to use the tools and techniques I presented to find creative solutions.

That made my task of providing feedback on their designs a joy. We shared a common conceptual framework and were able to speak a common language. Given the abstract nature of social impact, I was very impressed with that—and their designs—after less than 90 minutes of interaction.

I wrapped up the conference by attending Three Cups, Rosa Parks, and the Polar Bear: Telling Stories that Work, presented by Melanie Moore Kubo and Michaela Leslie-Rule from See Change. They use stories as a vehicle for conducting (primarily) qualitative evaluations. They call it story science. A nifty idea.

I liked this session for two reasons. First, Melanie and Michaela are expressive storytellers, so it was great fun listening to them speak. Second, they posed a simple question—Is this story true?—that turns out to be amazingly complex.

We summarize, simplify, and translate meaning all the time. Those of us who undertake (primarily) quantitative evaluations agonize over this because our standards for interpreting evidence are relatively clear but our standards for judging the quality of evidence are not.

For example, imagine that we perform a t-test to estimate a program’s impact. The t-test indicates that the impact is positive, meaningfully large, and statistically significant. We know how to interpret this result and what story we should tell—there is strong evidence that the program is effective.

But what if the outcome measure was not well aligned with the program’s activities? Or there were many cases with missing data? Would our story still be true? There is little consensus on where to draw the line between truth and fiction when quantitative evidence is flawed.
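A small sketch of the missing-data problem, in Python, may help. The scores are hypothetical numbers I made up, `None` marks a missing case, and the helper names `welch_t` and `drop_missing` are my own. The sketch computes Welch's version of the t-statistic using only the standard library, and it stops short of a p-value.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(treated, control):
    """Return Welch's t-statistic for the difference in group means."""
    standard_error = sqrt(
        variance(treated) / len(treated) + variance(control) / len(control)
    )
    return (mean(treated) - mean(control)) / standard_error


def drop_missing(scores):
    """Complete-case analysis: discard every case recorded as None."""
    return [s for s in scores if s is not None]


# Hypothetical outcome scores, invented for illustration only.
treated = [14, 15, None, 17, 16, None, 18, 15]
control = [12, 13, 11, None, 12, 14, 13, 12]

t = welch_t(drop_missing(treated), drop_missing(control))

# The statistic says nothing about how many cases were thrown away,
# so the share of missing cases is computed separately.
all_cases = treated + control
missing_share = sum(s is None for s in all_cases) / len(all_cases)

print(f"t = {t:.2f}, missing = {missing_share:.0%}")
```

The t-statistic looks the same whether no cases or many were dropped. Whether a story built on it is still true depends on a standard for acceptable missingness that we have to state ourselves.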

As Melanie and Michaela pointed out, it is critical that we strive to tell stories that are true, but equally important to understand and communicate our standards for truth. Amen to that.

The icing on the cake was the conference evaluation. Perhaps the best conference evaluation I have come across.

Everyone received four post-it notes, each a different color. As a group, we were given a question to answer on a post-it of a particular color, and only a minute to answer the question. Immediately afterward, the post-its were collected and displayed for all to view, as one would view art in a gallery.

Evaluation as art—I like that. Immediate. Intimate. Transparent.

Gosh, I like designers.