The AEA conference has been great. I have been very impressed with the presentations that I have attended so far, though I can’t claim to have seen the full breadth of what is on offer as there are roughly 700 presentations in total. Here are a few that impressed me the most.

Category Archives: Program Evaluation

AEA 2010 Conference Kicks Off in San Antonio

In the opening plenary of the Evaluation 2010 conference, AEA President Leslie Cooksy invited three leaders in the field (Eleanor Chelimsky, Laura Leviton, and Michael Patton) to speak on The Tensions Among Evaluation Perspectives in the Age of Obama: Influences on Evaluation Quality, Thinking and Values. They covered topics ranging from how government should use evaluation information to how Jon Stewart of the Daily Show outed himself as an evaluator during his Rally to Restore Sanity and/or Fear (“I think you know that the success or failure of a rally is judged by only two criteria; the intellectual coherence of the content, and its correlation to the engagement... I’m just kidding. It’s color and size. We all know it’s color and size.”).

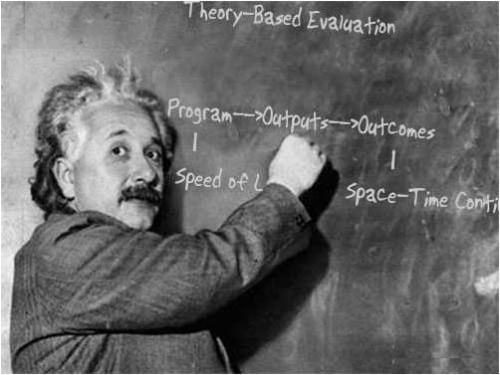

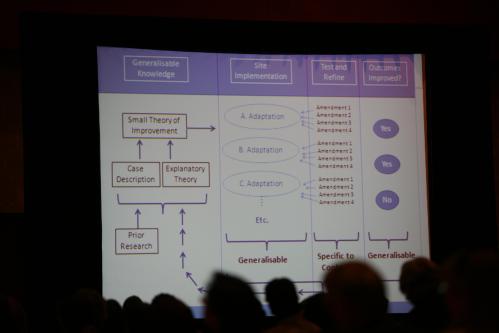

One piece that resonated with me was Laura Leviton’s discussion of how the quality of an evaluation is related to our ability to apply its results to future programs—what is referred to as generalization. She presented a graphic that described a possible process for generalization that seemed right to me; it’s what should happen. But how it happens was not addressed, at least in the short time in which she spoke. It is no small task to gather prior research and evaluation results, translate them into a small theory of improvement (a program theory), and then adapt that theory to fit specific contexts, values, and resources. Who should be doing that work? What are the features that might make it more effective?

Stewart Donaldson and I recently co-authored a paper on that topic that will appear in New Directions for Evaluation in 2011. We argue that stakeholders are and should be doing this work, and we explore how the logic underlying traditional notions of external validity—considered by some to be outdated—can be built upon to create a relatively simple, collaborative process for predicting the future results of programs. The paper is a small step toward raising the discussion of external validity (how we judge whether a program will work in the future) to the same level as the discussion of internal validity (how we judge whether a program worked in the past), while trying to avoid the rancor that has been associated with the latter.

More from the conference later.

Filed under AEA Conference, Evaluation Quality, Gargani News, Program Evaluation

Good versus Eval

After another blogging hiatus, the battle between good and eval continues. Or at least my blog is coming back online as the American Evaluation Association’s Annual Conference in San Antonio (November 10-14) quickly approaches.

I remember that twenty years ago evaluation was widely considered the enemy of good because it took resources away from service delivery. Now evaluation is widely considered an essential part of service delivery, but the debate over what constitutes a good program and a good evaluation continues. I will be joining the fray when I make a presentation as part of a session entitled Improving Evaluation Quality by Improving Program Quality: A Theory-Based/Theory-Driven Perspective (Saturday, November 13, 10:00 AM, Session Number 742). My presentation is entitled The Expanding Profession: Program Evaluators as Program Designers, and I will discuss how program evaluators are increasingly being called upon to help design the programs they evaluate, and why that benefits program staff, stakeholders, and evaluators. Stewart Donaldson is my co-presenter (The Relationship between Program Design and Evaluation), and our discussants are Michael Scriven, David Fetterman, and Charles Gasper. If you know these names, you know to expect a “lively” (OK, heated) discussion.

If you are an evaluator in California, Oregon, Washington, New Mexico, Hawaii, any other place west of the Mississippi, or anywhere that is west of anything, be sure to attend the West Coast Evaluators Reception Thursday, November 11, 9:00 pm at the Zuni Grill (223 Losoya Street, San Antonio, TX 78205) co-sponsored by San Francisco Bay Area Evaluators and Claremont Graduate University. It is a conference tradition and a great way to network with colleagues.

More from San Antonio next week!

Filed under Design, Evaluation Quality, Gargani News, Program Design, Program Evaluation

Quality is a Joke

If you have been following my blog (Who hasn’t?), you know that I am writing on the topic of evaluation quality, the theme of the 2010 annual conference of the American Evaluation Association taking place November 10-13. It is a serious subject. Really.

But here is a joke, though perhaps only the evaluarati (you know who you are) will find it amusing.

A quantitative evaluator, a qualitative evaluator, and a normal person are waiting for a bus. The normal person suddenly shouts, “Watch out, the bus is out of control and heading right for us! We will surely be killed!”

Without looking up from his newspaper, the quantitative evaluator calmly responds, “That is an awfully strong causal claim you are making. There is anecdotal evidence to suggest that buses can kill people, but the research does not bear it out. People ride buses all the time and they are rarely killed by them. The correlation between riding buses and being killed by them is very nearly zero. I defy you to produce any credible evidence that buses pose a significant danger. It would really be an extraordinary thing if we were killed by a bus. I wouldn’t worry.”

Dismayed, the normal person starts gesticulating and shouting, “But there is a bus! A particular bus! That bus! And it is heading directly toward some particular people! Us! And I am quite certain that it will hit us, and if it hits us it will undoubtedly kill us!”

At this point the qualitative evaluator, who was observing this exchange from a safe distance, interjects, “What exactly do you mean by bus? After all, we all construct our own understanding of that very fluid concept. For some, the bus is a mere machine, for others it is what connects them to their work, their school, the ones they love. I mean, have you ever sat down and really considered the bus-ness of it all? It is quite immense, I assure you. I hope I am not being too forward, but may I be a critical friend for just a moment? I don’t think you’ve really thought this whole bus thing out. It would be a pity to go about pushing the sort of simple linear logic that connects something as conceptually complex as a bus to an outcome as one-dimensional as death.”

Very dismayed, the normal person runs away screaming, the bus collides with the quantitative and qualitative evaluators, and it kills both instantly.

Very, very dismayed, the normal person begins pleading with a bystander, “I told them the bus would kill them. The bus did kill them. I feel awful.”

To which the bystander replies, “Tut tut, my good man. I am a statistician and I can tell you for a fact that with a sample size of 2 and no proper control group, how could we possibly conclude that it was the bus that did them in?”

To the extent that this is funny (I find it hilarious, but I am afraid that I may share Sir Isaac Newton’s sense of humor) it is because it plays on our stereotypes about the field. Quantitative evaluators are branded as aloof, overly logical, obsessed with causality, and too concerned with general rather than local knowledge. Qualitative evaluators, on the other hand, are suspect because they are supposedly motivated by social interaction, overly intuitive, obsessed with description, and too concerned with local knowledge. And statisticians are often looked upon as the referees in this cat-and-dog world, charged with setting up and arbitrating the rules by which evaluators in both camps must (or must not) play.

The problem with these stereotypes, like all stereotypes, is that they are inaccurate. Yet we cling to them and make judgments about evaluation quality based upon them. But what if we shift our perspective to that of the (tongue in cheek) normal person? This is not an easy thing to do if, like me, you spend most of your time inside the details of the work and the debates of the profession. Normal people want to do the right thing, feel the need to act quickly to make things right, and hope to be informed by evaluators and others who support their efforts. Sometimes normal people are responsible for programs that operate in particular local contexts, and at others they are responsible for policies that affect virtually everyone. How do we help normal people get what they want and need?

I have been arguing that we should, and when we do we have met one of my three criteria for quality—satisfaction. The key is first to acknowledge that we serve others, and then to do our best to understand their perspective. If we are weighed down by the baggage of professional stereotypes, it can prevent us from choosing well from among all the ways we can meet the needs of others. I suppose that stereotypes can be useful when they help us laugh at ourselves, but if we come to believe them, our practice can become unaccommodatingly narrow and the people we serve—normal people—will soon begin to run away (screaming) from us and the field. That is nothing to laugh at.

Filed under Evaluation, Evaluation Quality, Program Evaluation

It’s a Gift to Be Simple

Theory-based evaluation acknowledges that, intentionally or not, all programs depend on the beliefs influential stakeholders have about the causes and consequences of effective social action. These beliefs are what we call theories, and they guide us when we design, implement, and evaluate programs.

Theories live (imperfectly) in our minds. When we want to clarify them for ourselves or communicate them to others, we represent them as some combination of words and pictures. A popular representation is the ubiquitous logic model, which typically takes the form of box-and-arrow diagrams or relational matrices.

The common wisdom is that developing a logic model helps program staff and evaluators develop a better understanding of a program, which in turn leads to more effective action.

Not to put too fine a point on it, this last statement is a representation of a theory of logic models. I represented the theory with words, which have their limits; another form of representation might reveal, hide, or distort different aspects of the theory. In this case, my theory is simple and my representation is simple, so you quickly get the gist of my meaning. Simplicity has its virtues.

It also has its perils. A chief criticism of logic models is that they fail to promote effective action because they are vastly too simple to represent the complexity inherent in a program, its participants, or its social value. This criticism has become more vigorous over time and deserves attention. In considering it, however, I find myself drawn to the other side of the argument, not because I am especially wedded to logic models, but rather to defend the virtues of simplicity.

Filed under Commentary, Evaluation, Program Evaluation

Data-Free Evaluation

George Bernard Shaw quipped, “If all economists were laid end to end, they would not reach a conclusion.” However, economists should not be singled out on this account — there is an equal share of controversy awaiting anyone who uses theories to solve social problems. While there is a great deal of theory-based research in the social sciences, it tends to be more theory than research, and with the universe of ideas dwarfing the available body of empirical evidence, there tends to be little if any agreement on how to achieve practical results. This was summed up well by another master of the quip, Mark Twain, who observed that the fascinating thing about science is how “one gets such wholesale returns of conjecture out of such a trifling investment of fact.”

Recently, economists have been in the hot seat because of the stimulus package. However, it is the policymakers who depended on economic advice who are sweating because they were the ones who engaged in what I like to call data-free evaluation. This is the awkward art of judging the merit of untried or untested programs. Whether it takes the form of a president staunching an unprecedented financial crisis, funding agencies reviewing proposals for new initiatives, or individuals deciding whether to avail themselves of unfamiliar services, data-free evaluation is more the rule than the exception in the world of policies and programs.

Filed under Commentary, Design, Evaluation, Program Design, Program Evaluation, Research

The Most Difficult Part of Science

I recently participated in a panel discussion at the annual meeting of the California Postsecondary Education Commission (CPEC) for recipients of Improving Teacher Quality Grants. We were discussing the practical challenges of conducting what has been dubbed scientifically-based research (SBR). While there is some debate over what types of research should fall under this heading, SBR almost always includes randomized trials (experiments) and quasi-experiments (close approximations to experiments) that are used to establish whether a program made a difference.

SBR is a hot topic because it has found favor with a number of influential funding organizations. Perhaps the most famous example is the US Department of Education, which vigorously advocates SBR and at times has made it a requirement for funding. The push for SBR is part of a larger, longer-term trend in which funders have been seeking greater certainty about the social utility of programs they fund.

However, SBR is not the only way to evaluate whether a program made a difference, and not all evaluations set out to do so (as is the case with needs assessment and formative evaluation). At the same time, not all evaluators want to or can conduct randomized trials. Consequently, the push for SBR has sparked considerable debate in the evaluation community.

Filed under Commentary, Evaluation, Program Evaluation

Obama’s Inaugural Address Calls for More Evaluation

Today was historic and I was moved by its import. As I was soaking in the moment, one part of President Obama’s inaugural address caught my attention. There has been a great deal of discussion in the evaluation community about how an Obama administration will influence the field. He advocates a strong role for government and nonprofit organizations that serve the social good, but the economy is weak and tax dollars short. An oft-repeated question was whether he would push for more evaluation or less. He seems to have provided an answer in his inaugural address:

“The question we ask today is not whether our government is too big or too small, but whether it works – whether it helps families find jobs at a decent wage, care they can afford, a retirement that is dignified. Where the answer is yes, we intend to move forward. Where the answer is no, programs will end. And those of us who manage the public’s dollars will be held to account – to spend wisely, reform bad habits, and do our business in the light of day – because only then can we restore the vital trust between a people and their government.”

We have yet to learn Obama’s full vision for evaluation, especially the form it will take and how it will be used to improve government. But his statement seems to put him squarely in step with the bipartisan trend that emerged in the 1990s and has resulted in more (and more rigorous) evaluation. President Clinton took perhaps the first great strides in this direction, mandating evaluations of social programs in an effort to promote accountability and transparency. President Bush went further when many of the agencies under his charge developed a detailed (and controversial) working definition of evaluation as scientifically-based research. What will be Obama’s next step? Only time will tell.

Filed under Commentary, Evaluation, Program Evaluation

Theory Building and Theory-Based Evaluation

When we are convinced of something, we believe it. But when we believe something, we may not have been convinced. That is, we do not come by all our beliefs through conscious acts of deliberation. It’s a good thing, too, for if we examined the beliefs underlying our every action we wouldn’t get anything done.

When we design or evaluate programs, however, the beliefs underlying these actions do merit close examination. They are our rationale, our foothold in the invisible; they are what endow our struggle to change the world with possibility.

Filed under Commentary, Design, Evaluation, Program Design, Program Evaluation, Research

Fruitility (or Why Evaluations Showing “No Effects” Are a Good Thing)

The mythical character Sisyphus was punished by the gods for his cleverness. As mythological crimes go, cleverness hardly rates and his punishment was lenient — all he had to do was place a large boulder on top of a hill and then he could be on his way.

The first time Sisyphus rolled the boulder to the hilltop I imagine he was intrigued as he watched it roll back down on its own. Clever Sisyphus confidently tried again, but the gods, intent on condemning him to an eternity of mindless labor, had used their magic to ensure that the rock always rolled back down.

Could there be a better way to punish the clever?

Perhaps not. Nonetheless, my money is on Sisyphus because sometimes the only way to get it right is to get it wrong. A lot.

This is the principle of fruitful futility, or as I call it fruitility.

Filed under Commentary, Evaluation, Program Evaluation, Research

Tagged as fruitility, institute for education sciences, no effects, randomized trials, Sisyphus