In the opening plenary of the Evaluation 2010 conference, AEA President Leslie Cooksy invited three leaders in the field (Eleanor Chelimsky, Laura Leviton, and Michael Patton) to speak on “The Tensions Among Evaluation Perspectives in the Age of Obama: Influences on Evaluation Quality, Thinking and Values.” They covered topics ranging from how government should use evaluation information to how Jon Stewart of The Daily Show outed himself as an evaluator during his Rally to Restore Sanity/Fear: “I think you know that the success or failure of a rally is judged by only two criteria: the intellectual coherence of the content, and its correlation to the engagement – I’m just kidding. It’s color and size. We all know it’s color and size.”

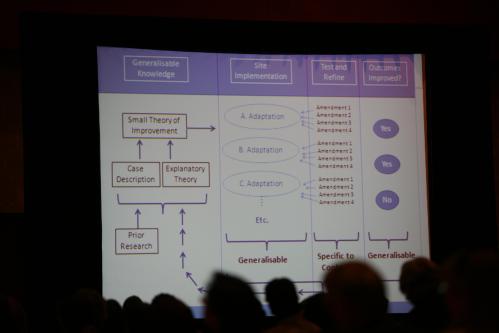

One piece that resonated with me was Laura Leviton’s discussion of how the quality of an evaluation is related to our ability to apply its results to future programs, a process referred to as generalization. She presented a graphic that described a possible process for generalization that seemed right to me; it’s what should happen. But how it happens was not addressed, at least in the short time in which she spoke. It is no small task to gather prior research and evaluation results, translate them into a small theory of improvement (a program theory), and then adapt that theory to fit specific contexts, values, and resources. Who should be doing that work? What are the features that might make it more effective?

Stewart Donaldson and I recently co-authored a paper on that topic that will appear in New Directions for Evaluation in 2011. We argue that stakeholders are and should be doing this work, and we explore how the logic underlying traditional notions of external validity—considered by some to be outdated—can be built upon to create a relatively simple, collaborative process for predicting the future results of programs. The paper is a small step toward raising the discussion of external validity (how we judge whether a program will work in the future) to the same level as the discussion of internal validity (how we judge whether a program worked in the past), while trying to avoid the rancor that has been associated with the latter.

More from the conference later.