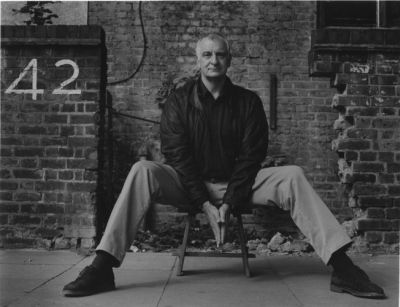

Douglas Adams

The late satirical author Douglas Adams spun a yarn about a society determined to discover the meaning of life. After millennia, they had developed a computer so powerful it could provide the answer. Gathering around on that long-anticipated day, the people waited for the computer to reveal the answer. It was 42. Puzzled and more than a little angry, the people wanted to know how this could be. The computer responded that the answer to the big question of the meaning of life, the universe and everything was most definitely 42 but, as it unfortunately turned out, neither the computer nor the people knew exactly what the question was.

A similar fate can befall evaluations, more than one of which has produced a precise answer to a question never framed or a question framed so vaguely as to be useless. It is easy enough to avoid this fate when you realize that, at their most basic level, evaluations address only three big questions: Can it work? Did it work? Will it work again? We call them “Can,” “Did,” and “Will” for short. Of course, we can ask other questions, but they tend to be in support of or in response to the big three. What good is asking, for example, “How does it work?” before you believe that it can, did or will?

It is interesting to note the pretzel-like logic associated with these three simple questions. If, through the course of an evaluation, you conclude that a program did work, then you must also conclude that it can work. If you conclude that a program will work again, then — because of the precise way this is determined through an evaluation study — it turns out that you must also conclude that it did work and by extension that it can work. On the flip side, concluding that a program did not work in a specific instance does not imply that it cannot. More confusingly, concluding that a program will not work again is predicated on concluding that it did not work in a specific instance, but this does not imply that it cannot work.

While this may appear both logically tortuous and torturous, there is a highly practical point. The only way to arrive at an answer that you find useful is to ask the right question. The right question, however, must do more than be of interest to you; it must also fit the program, its stage of development, its context and your budget. For instance, you may want to know the answer to “Will it work again?” But the answer to this question may be of little value if the program is still under development, likely to be modified substantially over the short term or intended to be replicated in a very different context or manner. Moreover, the cost and complexity of this sort of evaluation increase in a nonlinear way as you move from “Can” to “Will,” so much so that “Will it work again?” is rarely answered to a high level of rigor by evaluators.

The underlying principle is that a program should be sufficiently well developed and have sufficient promise to justify moving from “Can” to “Did,” or from “Did” to “Will.” Ironically, funders may demand a will-it-work-again evaluation or a did-it-work evaluation of a program that, given its current track record and state of development, is not ready to answer these questions. And programs under development rarely take advantage of relatively inexpensive can-it-work evaluations to build better programs. A good evaluator can help you navigate these perils and arrive at an answer — and a question — that matches the program and its context while meeting your needs (even if the answer turns out to be 42).


Asking Questions, Getting Answers



Filed under Commentary, Elements of Evaluation, Research

Tagged as douglas adams, questions for evaluators